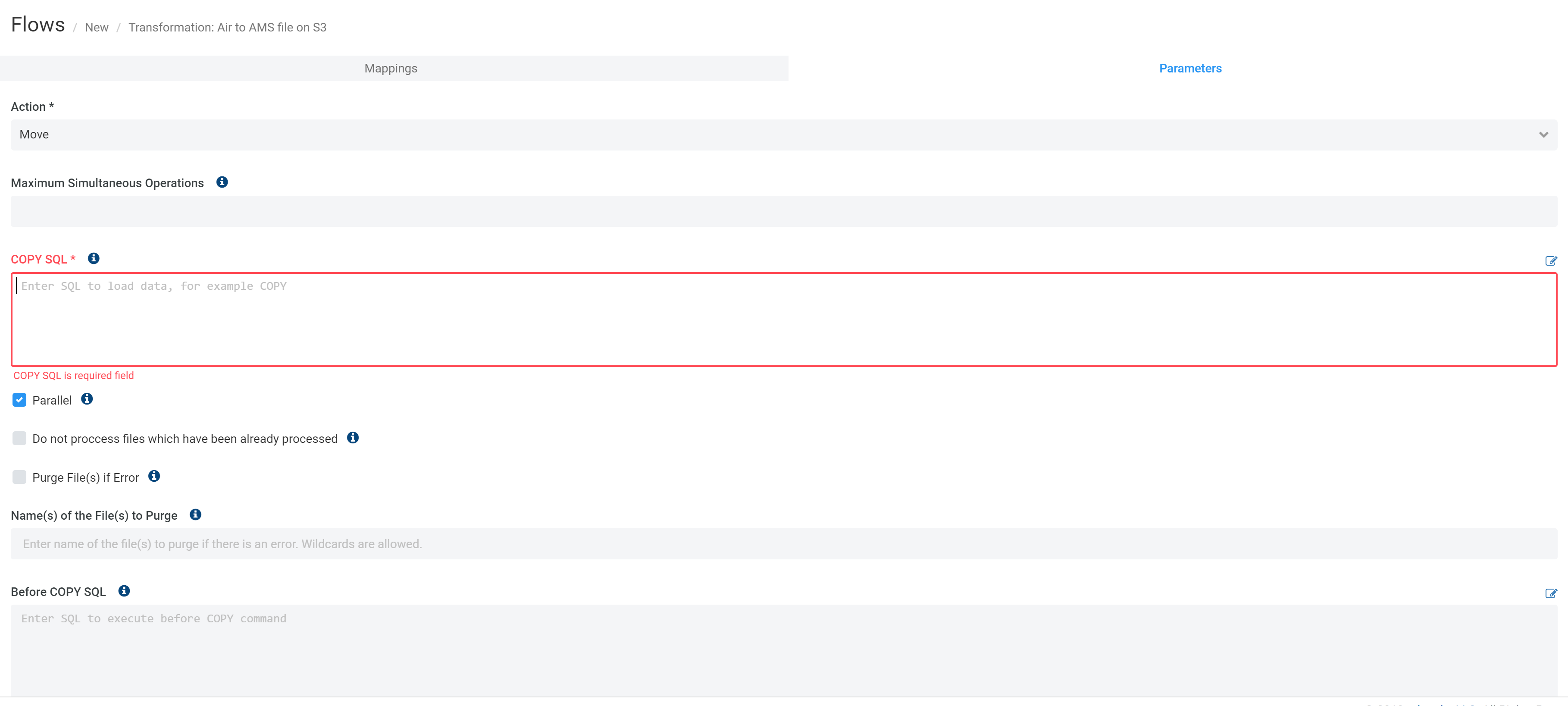

Aborted Redshift COPY command from S3

11/24/2023

Use the loadfroms3toredshift function to run the Redshift COPY command, which copies data files from an Amazon Simple Storage Service (S3) bucket into a Redshift table. For optimum parallelism, the ideal file size is between 1 MB and 125 MB after compression. The number of files should be a multiple of the number of slices in your cluster; this is so all nodes are doing maximum work in parallel. That last point is significant for achieving maximum throughput: if you have 8 nodes then you want n*8 files.

That said, 20,000 rows is such a small amount of data in Redshift terms that I'm not sure any further optimisations would make a significant difference to the speed of your process as it currently stands.
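To make the file-splitting advice concrete, here is a minimal Python sketch. It assumes you know the total compressed size of your data and the number of slices in your cluster. The names `plan_file_count` and `build_copy_statement`, along with the table, bucket, and IAM role in the usage lines, are hypothetical and only there for illustration. It does not call loadfroms3toredshift, because that function's signature isn't given here.

```python
# Size limits taken from the guidance above (compressed size per file).
MIN_FILE_BYTES = 1 * 1024 * 1024
MAX_FILE_BYTES = 125 * 1024 * 1024


def plan_file_count(total_compressed_bytes: int, slice_count: int) -> int:
    """Pick a file count that is a multiple of slice_count and keeps
    each file at or below MAX_FILE_BYTES."""
    if total_compressed_bytes <= 0 or slice_count <= 0:
        raise ValueError("size and slice count must be positive")
    # Fewest files that keeps every file under the upper limit (integer ceiling).
    min_files = -(-total_compressed_bytes // MAX_FILE_BYTES)
    # Round up to the next multiple of the slice count (at least one per slice).
    multiples = max(1, -(-min_files // slice_count))
    return multiples * slice_count


def build_copy_statement(table: str, s3_prefix: str, iam_role: str) -> str:
    """Build a COPY statement for gzip-compressed CSV files under one prefix.
    All arguments are placeholders supplied by the caller."""
    return (
        f"COPY {table} FROM '{s3_prefix}' "
        f"IAM_ROLE '{iam_role}' "
        "CSV GZIP;"
    )


# Hypothetical usage: 1 GiB of compressed data on a cluster with 8 slices.
files = plan_file_count(1024 * 1024 * 1024, 8)
per_file = (1024 * 1024 * 1024) // files
print(files, per_file >= MIN_FILE_BYTES)

print(build_copy_statement(
    "my_table",                                   # hypothetical table name
    "s3://my-bucket/exports/part_",               # hypothetical key prefix
    "arn:aws:iam::123456789012:role/MyCopyRole",  # hypothetical role ARN
))
```

For very small loads, one file per slice can leave each file under 1 MB. In that case the split is unlikely to matter much, which fits the point about 20,000 rows being a small amount of data.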
